Though Shareaholic is hosted in the AWS cloud, we avoid depending on Amazon’s virtualized cloud services whenever possible. If we ever hit a bottleneck in AWS, I want to be able to switch providers without needing to rebuild a core piece of our architecture. I also don’t want our tech team to have to make product and infrastructure sacrifices just so that we conform to AWS standard practices.

Load balancing was the first example of a service that Amazon provides but that we felt was better to manage ourselves, in our case with HAProxy.

Here’s how we did it.

Why HAProxy?

I’ve used HAProxy nonstop since 2009, and to date it has never been the culprit of a single problem I’ve experienced. It is extremely resource efficient and it is impeccably maintained by Willy Tarreau.

It provides both HTTP and TCP load balancing, and a powerful feature set not found in Amazon’s ELB.

Monitoring

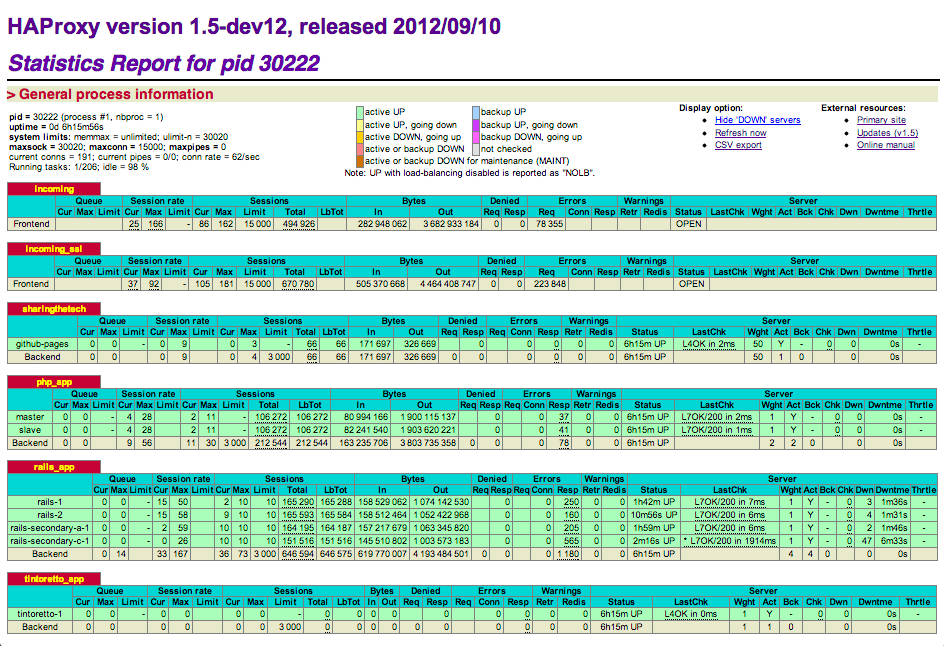

HAProxy provides a dashboard with global stats, server stats, and aggregate stats for each frontend and backend. On a single page you can see which servers are busiest, which ones are up and which ones are down. You can also view this data at a service level, so if you have a three-node cluster and no more than two of its servers were down at any one time, HAProxy correctly reports that the service did not experience any downtime.

While I was writing this post, one of our Rails boxes had degraded performance because too many background jobs were running. Using the HAProxy dashboard, I quickly spotted it. You probably can too.

Configurability

HAProxy is highly configurable. You have more than half a dozen load balancing algorithms to choose from. You can provide relative weighting for different servers in case you have boxes of differing resources. You can specify short health check timeouts on servers that should respond quickly and longer ones for servers that are slower. You can set these preferences globally, on a per-backend basis or on a per-server basis.
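To make relative weighting concrete, here is a minimal Python sketch of a weighted rotation. It is a simplified illustration, not HAProxy's actual scheduling algorithm, and the server names and weights are hypothetical.

```python
from collections import Counter
from itertools import cycle, islice


def weighted_rotation(weights):
    """Build one rotation in which each server appears `weight` times."""
    rotation = []
    for server, weight in weights.items():
        rotation.extend([server] * weight)
    return rotation


# Hypothetical servers: "big-box" has twice the resources of the others.
weights = {"big-box": 2, "small-box-1": 1, "small-box-2": 1}
rotation = weighted_rotation(weights)

# Dispatch 400 requests by cycling through the rotation.
assignments = Counter(islice(cycle(rotation), 400))
print(assignments)
```

With these weights the larger box receives half of the requests and each smaller box a quarter, which is the proportion a weight of 2 versus 1 is meant to express.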

Requests as Mutable Objects

We often think of web requests as being static, but with HAProxy we can bend them to our will to do important things.

Let’s start with the prototypical load balancer example: adding an X-Forwarded-Proto header so that our app server knows it’s receiving an SSL request even though it’s coming through on port 80:

reqadd X-Forwarded-Proto:\ https

Now let’s try something we can’t do with ELB: perform HTTP Basic Authentication before a request hits our app server.

userlist admins

user myusername insecure-password mypassword

frontend restricted_cluster

acl auth_tintoretto http_auth(admins)

http-request auth realm ShareaholicRestricted if !auth_tintoretto

Any web request being routed to restricted_cluster will need to contain valid HTTP auth credentials in order for that request to be sent to an application server. At Shareaholic we use this for our internal products and dashboards. This lets us manage authentication in a single place, and prevents us from needing to worry about it at the application level.
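For readers unfamiliar with what "valid HTTP auth credentials" look like on the wire, the following Python sketch shows the kind of check a proxy performs on the Authorization header. It is an illustration of HTTP Basic Authentication in general, not HAProxy's implementation; the `USERS` table and the function names are hypothetical and simply mirror the `userlist admins` example.

```python
import base64
import hmac

# Hypothetical credential table, mirroring the `userlist admins` example.
USERS = {"myusername": "mypassword"}


def build_header(username, password):
    """Build the Authorization header value a client would send."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8"))
    return "Basic " + token.decode("ascii")


def is_authorized(authorization_header):
    """Return True only if the header carries valid Basic credentials."""
    if not authorization_header:
        return False
    scheme, _, encoded = authorization_header.partition(" ")
    if scheme.lower() != "basic":
        return False
    try:
        decoded = base64.b64decode(encoded, validate=True).decode("utf-8")
    except ValueError:  # covers malformed base64 and invalid UTF-8
        return False
    username, separator, password = decoded.partition(":")
    if not separator:
        return False
    expected = USERS.get(username)
    if expected is None:
        return False
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(password.encode("utf-8"), expected.encode("utf-8"))
```

A request without the header, or with the wrong password, would be answered with a 401 challenge instead of being forwarded to an application server.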

Frontends

HAProxy supports any number of independently configurable load balancing frontends. In addition to HTTP load balancing, it can be used in TCP mode for general purpose load balancing. This means that if you have a Redis database with three read slaves, you can use the same HAProxy instance that balances your web traffic to balance your Redis traffic.

Backends and ACLs

Backends and ACLs enable you to host large-scale web sites and web services in ways not possible with simple request forwarding.

For example, did you know that the shareaholic.com domain is hosted partly in a PHP cluster, partly in a Rails cluster, partly in a Python cluster and partly as static content on github.com? We accomplish this with backends and ACLs.

First, we define an ACL based on the path of the URL request. An abbreviated example:

# Tech blog traffic goes to tech blog

acl techblog path_beg /tech

use_backend sharingthetech if techblog

# Other traffic goes to rails app

default_backend rails_app

Then we define backends composed of one or more servers to handle the different kinds of traffic:

backend rails_app :80

option httpchk /haproxy_health_check

server rails-1 10.1.1.1:8080 check

server rails-2 10.1.1.2:8080 check

server rails-3 10.1.1.3:8080 check

backend sharingthetech :80

reqirep ^Host: Host:\ shareaholic.com

server github-pages shareaholic.github.com:80

There are two things to note in this example:

First, our Rails servers are configured to receive their health checks at /haproxy_health_check. These requests are handled by a proc in our routes.rb. We chose this rather than a static file on disk because we want the Rails stack to be invoked during the health check; if Apache is up but Rails isn’t working, we want the health check to fail.

Second, GitHub Pages only works for a single host (CNAME), so in our sharingthetech backend, we rewrite the Host header to ensure it is shareaholic.com, which is what’s contained in our GitHub Pages CNAME file.
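The routing decision in this example can be sketched in a few lines of Python. This is only a model of the logic, under the assumption that rules are evaluated in order and the first match wins; the `route` function and the `RULES` and `HOST_OVERRIDES` tables are hypothetical names, not part of HAProxy.

```python
# Ordered (path prefix, backend) rules, modelling `acl ... path_beg` + `use_backend`.
RULES = [("/tech", "sharingthetech")]
DEFAULT_BACKEND = "rails_app"

# Backends whose requests get a fixed Host header, modelling the `reqirep ^Host:` line.
HOST_OVERRIDES = {"sharingthetech": "shareaholic.com"}


def route(path, headers):
    """Pick a backend by path prefix and return it with the forwarded headers."""
    backend = DEFAULT_BACKEND
    for prefix, name in RULES:
        if path.startswith(prefix):
            backend = name
            break
    forwarded = dict(headers)  # copy so the caller's headers are left untouched
    if backend in HOST_OVERRIDES:
        forwarded["Host"] = HOST_OVERRIDES[backend]
    return backend, forwarded


print(route("/tech/some-post", {"Host": "www.shareaholic.com"}))
print(route("/about", {"Host": "www.shareaholic.com"}))
```

Note that a pure prefix match such as path_beg /tech also matches a path like /technology, so prefixes need to be chosen with that in mind.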

Note: Since writing this post we have moved our tech blog from http://shareaholic.com/tech to http://tech.shareaholic.com. This example illustrates how we had hosted it at http://shareaholic.com/tech.

Shareaholic’s HAProxy Production Setup

Deployment

We run HAProxy on EC2 small boxes backed by instance storage. We do not back up these instances because Chef generates the config files on the fly. We use a custom fork of the haproxy cookbook that provides support for compiling HAProxy from source. We use the latest 1.5-dev12 build.

The shareaholic.com A record points to an Elastic IP address, which we assign to our load balancer. A second load balancer is always running; if the primary load balancer goes down, we simply reassign the Elastic IP address to our backup load balancer.

Because HAProxy consumes negligible CPU cycles and memory when not in use, we save money by avoiding a single-tenant backup balancer. Instead we run our backup balancer on a utility box that is doing other work, and that utility box doubles as a hot standby that can handle traffic in a pinch.

HAProxy Configuration

Here is our production haproxy.cfg. The only changes are 1) redactions to protect authentication credentials and 2) changes to IP addresses and paths to avoid exposing potential attack vectors:

[Embedded haproxy.cfg listing (88 lines); contents not preserved in this copy.]

SSL Termination

Terminating SSL at the Load Balancer: Why?

Many web sites defer SSL termination to the app servers that handle the individual requests. Because of the way we route traffic at Shareaholic, this is not possible for us. In order to choose a backend based on the request’s path or its Host header, we need to be able to inspect the request. This is only possible once the request has been decrypted.

An additional benefit of terminating SSL at the load balancer is the operational DRY it provides. Rather than configure SSL termination for JBoss and Apache and nginx and any other app server we run, we can configure it in one place. When it’s time to update our SSL keys, we just drop them into that one place and redeploy the box.

How We Terminate SSL

As of build 1.5-dev12 (September 10, 2012), HAProxy can be configured to perform SSL termination. We use stud for this task, however, because HAProxy did not have this feature when we began using it. We considered the more popular stunnel but found that stud provided substantially better performance. This is very important to us because SSL termination is the most expensive part of load balancing, and we want to squeeze as many connections as possible out of our EC2 boxes.

I’ve open sourced the stud Chef cookbook that I wrote for deploying stud to our production servers. Even if you don’t use Chef, that cookbook is instructive in how to get stud up and running quickly.

Performance and Scalability

As I write this post, HAProxy reports 161 concurrent connections with a new connection rate of 67/second. It is using about 4.1% of the single EC2 compute unit allocated to our EC2 small box.

stud is consuming an additional 9.7% of the CPU. We force SSL on all pages, so that is with near-100% of traffic flowing over SSL. (I say “near” to account for initial HTTP requests being redirected to their HTTPS equivalents.) Together, HAProxy and stud are using about 13.8% of one compute unit. Extrapolating linearly from these numbers, a single EC2 compute unit can handle roughly 1,167 concurrent connections (161 / 0.138), with an arrival rate of about 486 connections/second (67 / 0.138). (RAM is a non-factor; combined, HAProxy and stud are consuming only 73.6 MB.)

We can scale vertically on AWS until we need more than 88 EC2 compute units. That means we won’t need to tackle horizontal scalability until we are handling over 100,000 concurrent connections or accepting over 40,000 new connections per second.
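The arithmetic behind these estimates can be reproduced with a short Python sketch. It uses only the figures quoted in this post and assumes that load scales linearly with CPU, which is a simplification; the variable names are my own.

```python
# Figures quoted in this post.
concurrent_connections = 161
new_connections_per_second = 67
haproxy_cpu_fraction = 0.041  # 4.1% of one EC2 compute unit
stud_cpu_fraction = 0.097     # 9.7% of one EC2 compute unit
max_compute_units = 88        # largest vertical scale mentioned in the post

# Combined share of one compute unit used by HAProxy and stud.
cpu_fraction = haproxy_cpu_fraction + stud_cpu_fraction

# Linear extrapolation to 100% of a single compute unit.
per_unit_concurrent = round(concurrent_connections / cpu_fraction)
per_unit_rate = round(new_connections_per_second / cpu_fraction)

# Linear extrapolation to the largest box.
max_concurrent = per_unit_concurrent * max_compute_units
max_rate = per_unit_rate * max_compute_units

print(per_unit_concurrent, per_unit_rate)  # 1167 486
print(max_concurrent, max_rate)            # 102696 42768
```

These results are where the thresholds of over 100,000 concurrent connections and over 40,000 new connections per second come from.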

If you’re fortunate enough to have that problem, the simplest way to scale horizontally is with Round-Robin DNS. This enables you to point your A record to multiple load balancers straight from your DNS.

Go Give It a Try!

Between our Chef cookbooks and the configuration posted above, we’ve open sourced everything you need to build and deploy your own load balancer, complete with SSL termination and examples for path-based and domain-based routing.

If you’ve ever wanted to host your blog on your application domain, or if you’re trying to avoid yet another provider-specific dependency in your tech stack, I encourage you to give this a try and see how easy it is to do load balancing yourself.